
LMMS oversampling export

8/27/2023

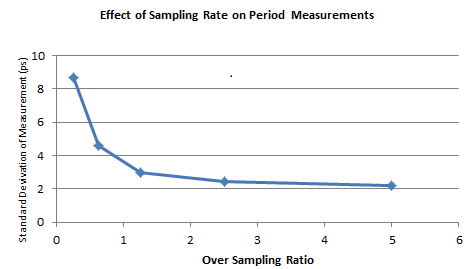

If you export directly to OGG, you can't edit the resulting sound anymore (or you can, but not losslessly, so the losses accumulate: it's like taking a photo of a JPEG and then saving it again as a JPEG). With both audio and image editing the principle is the same: always use lossless formats for editing, and only convert to a lossy format as the very last step. So I always export as WAV and do the finishing touches in Audacity (it may need some extra limiting/compression, as well as some slight equalization, in addition to normalization). Audacity is very good for this kind of post-processing. Also, I'm not sure whether the same applies to OGG, but at least MP3 compression causes distortion at levels near 0 dBFS, so it's best to normalize to a bit less than 0 dB, like -0.2 dB.

Bitrate only applies to OGG files, so we can ignore it.

Sample rate is the "resolution" of the sound file: it's how many times per second the sound is sampled to produce the waveform. The higher the sample rate, the better the quality. Generally, 96 kHz is a pretty good sample rate to export at, even if you later downsample to a lower rate, because when you render at a higher sample rate, the calculations used for rendering the sound are more accurate, resulting in better sound quality.

Interpolation is hard to explain; it's a mathematical algorithm used for resampling sound. But briefly, the same applies here: the higher the quality setting, the better. I think it currently only affects samples played in the AFP (AudioFileProcessor), though I'm not sure; I'll have to check. In any case, it's pretty safe to use the medium setting, as recommended.

Oversampling does what it says: it renders at a higher sample rate than specified, then resamples down to the specified sample rate. Basically, using a 44100 Hz sample rate with 2x oversampling is practically the same as using 88200 Hz with 1x oversampling, except that in the second case the resulting sound file will be twice as large.
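To make the oversampling idea concrete, here is a minimal Python/NumPy sketch, assuming a naive sawtooth as a stand-in for whatever an instrument actually renders. It is not LMMS's code: `render_saw`, `lowpass_fir`, `downsample`, `export_oversampled` and `normalize_peak` are hypothetical helpers, and a simple Hamming-windowed sinc filter stands in for whatever resampler LMMS really uses. Rendering at 2 × 44100 = 88200 Hz and then decimating by 2 gives a 44100 Hz signal, so the exported file has half the samples of a plain 88200 Hz render. The last helper applies the -0.2 dB normalization, using gain = 10^(dB/20).

```python
import numpy as np


def render_saw(freq, rate, seconds):
    """Naive (non-band-limited) sawtooth in [-1, 1): a hypothetical stand-in for a synth voice."""
    t = np.arange(int(round(rate * seconds))) / rate
    return 2.0 * ((freq * t) % 1.0) - 1.0


def lowpass_fir(num_taps, cutoff):
    """Hamming-windowed sinc low-pass; cutoff is a fraction of the sample rate (0 < cutoff < 0.5)."""
    n = np.arange(num_taps) - (num_taps - 1) / 2
    h = 2.0 * cutoff * np.sinc(2.0 * cutoff * n)  # ideal low-pass impulse response
    h *= np.hamming(num_taps)                    # taper to limit ripple
    return h / h.sum()                           # unity gain at DC


def downsample(x, factor, num_taps=101):
    """Filter below the target Nyquist frequency, then keep every `factor`-th sample."""
    if factor == 1:
        return x.copy()
    h = lowpass_fir(num_taps, cutoff=0.45 / factor)  # a little under 0.5 / factor
    return np.convolve(x, h, mode="same")[::factor]


def export_oversampled(freq, rate, seconds, factor):
    """Render at rate * factor, then bring the result down to the requested rate."""
    return downsample(render_saw(freq, rate * factor, seconds), factor)


def normalize_peak(x, target_db=-0.2):
    """Scale so the highest absolute sample sits at target_db dBFS (gain = 10**(dB/20))."""
    peak = np.max(np.abs(x))
    if peak == 0:
        return x.copy()
    return x * (10 ** (target_db / 20) / peak)


# 44100 Hz with 2x oversampling vs. a plain 88200 Hz render, one second each.
at_44k_2x = export_oversampled(1000.0, 44100, 1.0, factor=2)
at_88k_1x = render_saw(1000.0, 88200, 1.0)
print(len(at_44k_2x), len(at_88k_1x))  # 44100 88200
ready = normalize_peak(at_44k_2x)
print(round(float(np.max(np.abs(ready))), 4))  # 0.9772
```

The low-pass step is what makes this more than throwing samples away: keeping every second sample without filtering would fold content above the new Nyquist frequency (22050 Hz here) back into the audible band as aliasing.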

In short, it's always best to export as WAV, not OGG, mainly because you may need to do some post-processing steps, like normalization.

Class-imbalanced problems (CIP) are one of the challenges in developing unbiased machine learning (ML) models for prediction. CIP occurs when data samples are not equally distributed between two or more classes. Borderline Synthetic Minority Oversampling Technique (Borderline-SMOTE) is one of the approaches used to balance imbalanced data by oversampling the minority (limited) samples. One potential drawback of existing Borderline-SMOTE is that it focuses on the data samples that lie at the border and gives more attention to extreme observations, ultimately limiting the creation of more diverse data after oversampling; this is the case for most Borderline-SMOTE-based oversampling strategies. As a result, marginalization occurs after oversampling. To address these issues, in this work we propose a hybrid oversampling technique that combines the power of Borderline-SMOTE and a Generative Adversarial Network (GAN) to generate more diverse data that follow Gaussian distributions. We named it BSGAN and tested it on four highly imbalanced datasets: Ecoli, Wine quality, Yeast, and Abalone. Our preliminary computational results reveal that BSGAN outperformed existing Borderline-SMOTE and GAN-based oversampling techniques and created a more diverse dataset that follows a normal distribution after oversampling.
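The Borderline-SMOTE half of the hybrid described above can be sketched in a few lines of Python/NumPy. This is a minimal sketch of the classic Borderline-SMOTE1 rule, not the BSGAN authors' code, and it leaves out the GAN stage entirely: a minority point counts as "danger" when at least half, but not all, of its `m` nearest neighbours are majority points, and new samples are interpolated between a danger point and one of its `k` nearest minority neighbours. The function name `borderline_smote` and its parameters are illustrative assumptions, and brute-force distances keep it short, so it only suits small datasets. Because every synthetic point lies on a segment between two existing minority points near the border, the sketch also illustrates the limited-diversity drawback the abstract describes.

```python
import numpy as np


def borderline_smote(X_min, X_maj, n_synthetic, m=5, k=5, seed=0):
    """Borderline-SMOTE1 sketch (hypothetical helper; BSGAN's GAN stage is not included).

    X_min: (n_min, d) minority samples, n_min >= 2; X_maj: (n_maj, d) majority samples.
    Returns (synthetic samples of shape (n, d), indices of the 'danger' minority points).
    """
    rng = np.random.default_rng(seed)
    X_all = np.vstack([X_min, X_maj])
    is_maj = np.concatenate([np.zeros(len(X_min), bool), np.ones(len(X_maj), bool)])
    m = min(m, len(X_all) - 1)
    k = min(k, len(X_min) - 1)

    # Step 1: find borderline ("danger") minority points.
    danger = []
    for i, x in enumerate(X_min):
        dist = np.linalg.norm(X_all - x, axis=1)
        dist[i] = np.inf                       # ignore the point itself
        neighbours = np.argsort(dist)[:m]      # m nearest neighbours, any class
        n_maj = int(is_maj[neighbours].sum())
        if m / 2 <= n_maj < m:                 # all-majority neighbours => treated as noise
            danger.append(i)
    danger = np.array(danger, dtype=int)
    if len(danger) == 0:
        return np.empty((0, X_min.shape[1])), danger

    # Step 2: interpolate between a danger point and one of its k nearest minority neighbours.
    synthetic = np.empty((n_synthetic, X_min.shape[1]))
    for s in range(n_synthetic):
        i = danger[rng.integers(len(danger))]
        dist = np.linalg.norm(X_min - X_min[i], axis=1)
        dist[i] = np.inf
        j = rng.choice(np.argsort(dist)[:k])
        gap = rng.random()                     # uniform in [0, 1)
        synthetic[s] = X_min[i] + gap * (X_min[j] - X_min[i])
    return synthetic, danger


# Toy usage: minority points on a line, sandwiched between majority points.
X_min = np.array([[float(i), 0.0] for i in range(6)])
X_maj = np.array([[float(i), s] for i in range(6) for s in (0.1, -0.1)])
syn, danger = borderline_smote(X_min, X_maj, n_synthetic=30, k=2, seed=3)
print(syn.shape, danger.tolist())  # (30, 2) [0, 1, 2, 3, 4, 5]
```

With well-separated classes no point is classified as borderline and the sketch generates nothing; that is the intended behaviour of the borderline rule rather than a bug.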
